- CALL ANYTIME : (+598) 95390490

- info@thecodelight.com

- April 29, 2022

- Alex Rodríguez

Introduction guide to Dialogflow.

Dialogflow grew out of API.AI, which Google acquired a few years ago, and is now offered as a SaaS product in which agents are configured to provide conversational services through APIs and integrate with various platforms.

This post is not a substitute for the official documentation; instead, I will try to be practical about how to use Dialogflow.

I will show a series of tests with Google Assistant, the assistant available on Android phones, Google Home devices, cars with Android Auto, and more. Dialogflow can also be used with native mobile apps and web pages, and the number of environments that talk to users through voice, a more natural interface, keeps growing.

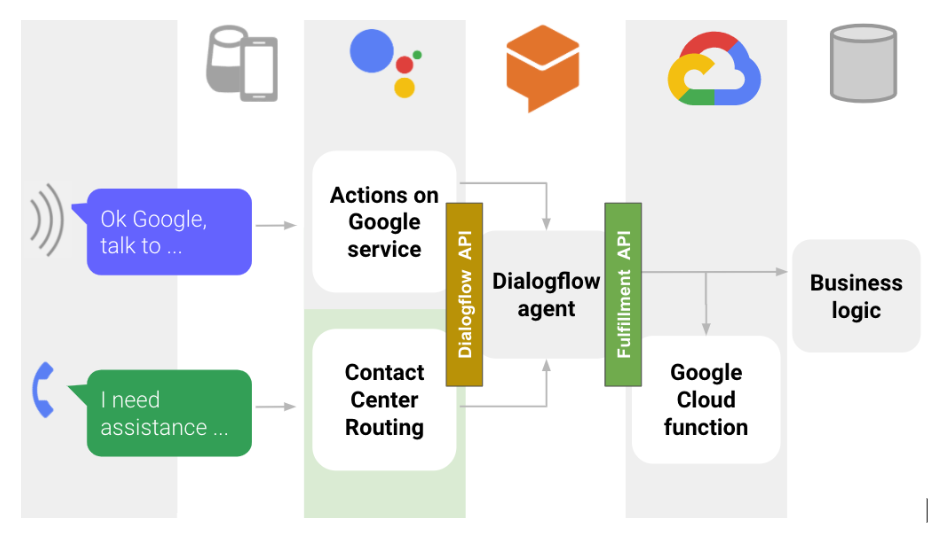

This graphic summarizes the flow of the different pieces to consider when creating a bot that we talk to from a device like a Google Home:

Let's start with the important definitions:

An Actions on Google "action" is an application published so that we can interact with it from any Google Assistant client. We will see later how to publish our actions.

A Dialogflow "agent" is a single conversation engine published via one or more of the Dialogflow integrations, or consumed through its API.

This agent is linked to a Google Cloud project, and we will see later that it can use additional resources in Google Cloud, or elsewhere, to perform part of its functions.

A Google Cloud "Cloud Function" is a piece of code that Google Cloud deploys and runs for us, scaling it automatically and keeping it highly available. With Dialogflow we generally write these functions in Node.js, although other languages are also available, and they can be used for many purposes. For Dialogflow, we will use Cloud Functions to run our agent's logic and process data so that it can give detailed responses to our users.

If we move on to look at Dialogflow in a little more detail, within an agent we have to use several additional concepts:

An intent is a user interaction with a concrete meaning that results in an action or response from our bot. In general, we define one intent for each thing the user can say, regardless of where we are in the conversation. Intents are not defined around the answers we want to give; those are built by the logic of our agent.

A training phrase is an example of a sentence that the bot's user might say, and it is used to build a machine learning model based on natural language processing. Thanks to this, it is not necessary to define hundreds of examples covering every variant of the language; our bot will automatically interpret cases similar to those provided. The norm for a production environment is to define between 20 and 40 phrases for each intent.

You can define literal and example training phrases, as we will see in a later post.

An entity allows us to extract values from training phrases, such as a date, location, or quantity, so that we can use these values in our bot. Some entities are defined at the system level, such as date or city, and others we define ourselves based on the bot's theme. For the latter, we can define synonyms for each entry of an entity type. For example, we can have an entity type called vehicle where one entry is car and another is motorcycle. For car, we can use the synonyms car, automobile, etc.
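The idea of values with synonyms can be sketched in plain Node.js. This is only an illustration, not how Dialogflow matches entities internally (its matching is model-based). The object shape, the `resolveEntity` helper, and the "motorbike" synonym are assumptions made up for this example.

```javascript
// Hypothetical "vehicle" entity: each entry has a reference value and synonyms.
const vehicleEntity = {
  name: "vehicle",
  entries: [
    { value: "car", synonyms: ["car", "automobile"] },
    { value: "motorcycle", synonyms: ["motorcycle", "motorbike"] },
  ],
};

// Return the reference value whose synonym appears as a word in the text,
// or null when nothing matches. A simple word lookup, for illustration only.
function resolveEntity(entity, text) {
  const words = text.toLowerCase().split(/[^a-z]+/).filter(Boolean);
  for (const entry of entity.entries) {
    if (entry.synonyms.some((synonym) => words.includes(synonym))) {
      return entry.value;
    }
  }
  return null;
}

console.log(resolveEntity(vehicleEntity, "I need an automobile for Friday")); // car
```

Whatever synonym the user says, the bot receives the same reference value, which is what keeps the rest of the logic simple.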

Composite entities can be created by combining two previously defined entities, for example "red car", where car is a type of vehicle and red is a color. Additionally, we can create user entities, which last only 10 minutes within a user's session and which we create programmatically in our bot.

The main advantage of entities is that, when defining a training phrase, it is only necessary to label the entity to cover all of its possible values and synonyms, so we greatly reduce the need for additional phrases.

The parameters of an intent are variables that are captured based on entities, and that we can use later in our conversation. We can make these parameters mandatory, in which case the bot will ask the user for them before returning the response of our intent.
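As a rough sketch of what mandatory parameters imply, the function below picks the next question to ask while a required value is still missing. Dialogflow handles this prompting itself; the parameter list, the prompts, and the `nextPrompt` helper are hypothetical and only model the behavior.

```javascript
// Hypothetical parameter definitions for a vehicle booking intent.
const bookingParameters = [
  { name: "vehicle", required: true, prompt: "Which vehicle would you like?" },
  { name: "date", required: true, prompt: "For what date?" },
  { name: "notes", required: false, prompt: "" },
];

// Return the prompt for the first required parameter that has no value yet,
// or null when every required parameter has been collected.
function nextPrompt(definitions, collected) {
  for (const definition of definitions) {
    const value = collected[definition.name];
    const missing = value === undefined || value === null || value === "";
    if (definition.required && missing) {
      return definition.prompt;
    }
  }
  return null;
}

console.log(nextPrompt(bookingParameters, { vehicle: "car" })); // For what date?
console.log(nextPrompt(bookingParameters, { vehicle: "car", date: "2022-05-01" })); // null
```

Only when the function returns `null` would the intent's response be produced.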

The response of an intent is defined together with the intent when it is a simple response that we can express with predefined text and parameters. If we need to process information, create more elaborate conversation flows, and so on, we have to write code that determines the next action or response when an intent is matched.

This is what we call a "fulfillment", which handles the logic that defines our bot. We can create it as a web service hosted completely externally, or we can use the code editor provided by Dialogflow, which uses Node.js and lets us easily deploy a Google Cloud Function. We will use the latter method in a later article.
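A minimal sketch of such a fulfillment is shown below as a pure function, so it can be run without deploying anything. It assumes the Dialogflow ES webhook JSON format, where the request carries `queryResult.intent.displayName` and `queryResult.parameters` and the response carries `fulfillmentText`. The `book_vehicle` intent, the reply texts, and the exported function name in the final comment are hypothetical.

```javascript
// Map of intent display names to handlers. The intent names are hypothetical.
const intentHandlers = new Map([
  ["book_vehicle", (params) => `Your ${params.vehicle} is booked for ${params.date}.`],
  ["Default Welcome Intent", () => "Hi! What would you like to book?"],
]);

// Build a webhook response body from a webhook request body.
function handleWebhook(body) {
  const queryResult = body.queryResult || {};
  const intentName = queryResult.intent ? queryResult.intent.displayName : "";
  const handler = intentHandlers.get(intentName);
  const text = handler
    ? handler(queryResult.parameters || {})
    : "Sorry, I can't help with that yet.";
  return { fulfillmentText: text };
}

const bookingReply = handleWebhook({
  queryResult: {
    intent: { displayName: "book_vehicle" },
    parameters: { vehicle: "car", date: "Friday" },
  },
});
console.log(bookingReply.fulfillmentText); // Your car is booked for Friday.

// In a Cloud Function this would typically be exposed as an HTTP handler, e.g.:
// exports.fulfillment = (req, res) => res.json(handleWebhook(req.body));
```

Keeping the logic in a function that takes and returns plain objects makes it easy to test locally before wiring it to an HTTP endpoint.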

The context is the way to tell the bot where in the conversation a certain intent is located.

For example, when we detect that a user wants to make a reservation, we set a context called "reservation". By using this context as the input context of another intent, we ensure that the intent, and its fulfillment, is triggered only when the context has already been set.
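From a fulfillment, a context like "reservation" can be activated by returning it in the webhook response. The sketch below assumes the Dialogflow ES response format, where `outputContexts` entries are named `<session>/contexts/<context name>` and carry a `lifespanCount`. The session path, the reply text, and the `replyWithContext` helper are hypothetical.

```javascript
// Build a webhook response that replies to the user and activates a context.
// `session` is the session path Dialogflow sends in the webhook request.
function replyWithContext(session, text, contextName, lifespanCount) {
  return {
    fulfillmentText: text,
    outputContexts: [
      {
        name: `${session}/contexts/${contextName}`,
        lifespanCount: lifespanCount,
      },
    ],
  };
}

const reservationReply = replyWithContext(
  "projects/my-project/agent/sessions/abc123", // hypothetical session path
  "For what date would you like to reserve?",
  "reservation",
  5
);
console.log(reservationReply.outputContexts[0].name);
// projects/my-project/agent/sessions/abc123/contexts/reservation
```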

A clear example of the use of contexts is yes/no response intents. If our conversation with the user contains several questions that are answered with yes or no, we have to define a yes/no intent for each of those questions, each one with a different context, since each one sits at a different point of the conversation and leads to a different follow-up response.
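The yes/no case can be illustrated with a toy matcher: the same "yes" resolves to a different intent depending on which context is active. This is not Dialogflow's matching algorithm, only a model of the rule described above; the intent names, context names, and the `matchIntent` helper are hypothetical.

```javascript
// Two hypothetical "yes" intents, each tied to a different input context.
const yesIntents = [
  { name: "confirm_reservation_yes", inputContext: "awaiting_confirmation", phrases: ["yes", "sure"] },
  { name: "add_insurance_yes", inputContext: "awaiting_insurance", phrases: ["yes", "sure"] },
];

// Pick the first intent whose phrase matches and whose input context is active.
function matchIntent(intents, utterance, activeContexts) {
  const said = utterance.trim().toLowerCase();
  const match = intents.find(
    (intent) =>
      intent.phrases.includes(said) &&
      activeContexts.includes(intent.inputContext)
  );
  return match ? match.name : null;
}

console.log(matchIntent(yesIntents, "Yes", ["awaiting_insurance"])); // add_insurance_yes
console.log(matchIntent(yesIntents, "Yes", ["awaiting_confirmation"])); // confirm_reservation_yes
console.log(matchIntent(yesIntents, "Yes", [])); // null
```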

Follow-up intents allow us to restrict certain intents so that they are only detected when nested within a specific conversation flow. In several places I have read that it is better not to use them, although I don't know the reason.

Contexts are what let us define the flow of a conversation. Later we will see how to create a graph with the flow of our conversation, its contexts, parameters, and so on, which is very useful when creating an agent.

The last definition is the event: an action, predefined in the system or defined by us in the fulfillment, that lets us trigger a specific intent. In fact, every bot starts running through an event. In the case of Google Assistant, the call to the agent generates the "Welcome" event, which by default triggers the intent called "Default Welcome Intent".
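As an illustration of an event defined from the fulfillment, the sketch below builds a webhook response that asks Dialogflow to trigger whichever intent listens for a given event. It assumes the Dialogflow ES `followupEventInput` response field with `name`, `languageCode`, and `parameters`. The event name `RESERVATION_EXPIRED` and the `triggerEvent` helper are hypothetical.

```javascript
// Build a webhook response that triggers another intent through an event.
function triggerEvent(eventName, languageCode, parameters) {
  return {
    followupEventInput: {
      name: eventName,
      languageCode: languageCode,
      parameters: parameters || {},
    },
  };
}

const expiredFollowUp = triggerEvent("RESERVATION_EXPIRED", "en-US", { vehicle: "car" });
console.log(JSON.stringify(expiredFollowUp, null, 2));
```

An intent configured with that event name would then be matched without the user having to say anything.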

This article is just a summary of what Dialogflow is and what it does. I hope it helps.

If you need to develop a chatbot and you don't know where to start, don't hesitate to contact us. Greetings

- Luis Lamas 3387. Montevideo. Uruguay

- Week Days: 09:00 to 18:00, Sunday: Closed